Every enterprise AI initiative eventually hits the same wall. The models are trained. The pipelines are built. The business case is approved. And then the infrastructure bill arrives — and it looks nothing like what was projected.

The culprit is almost always the same: static compute. Organisations provision infrastructure for peak demand, then leave it idle for as much as 80% of the time. In machine learning environments, where training jobs are bursty, inference loads are unpredictable, and experimentation cycles are continuous, this approach is financially unsustainable and operationally rigid.

Just-in-time (JIT) compute is the architectural response to this problem. It is not a single product or platform — it is a design principle: provision compute precisely when it is needed, at exactly the scale required, and release it the moment the workload completes. Applied correctly across an MLOps platform, it can reduce infrastructure costs by 40–70% while simultaneously improving throughput and deployment velocity.

Why static infrastructure fails ML workloads

Traditional infrastructure was designed for steady-state workloads — web servers, databases, application tiers. These systems benefit from always-on provisioning because their demand curves are relatively predictable and continuous.

Machine learning workloads are structurally different. A model training job may require 32 GPUs for four hours, then nothing for two days. A batch inference pipeline may need 200 CPU cores every night at midnight, then zero during business hours. A feature engineering job spikes when new data arrives, then terminates. Applying always-on provisioning to this profile wastes capital at a rate that compounds with every new model added to the portfolio.
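
To make the waste concrete, the training example above can be worked through as simple arithmetic. The sketch below is illustrative only: the `BurstyWorkload` class and its method names are hypothetical, and it ignores provisioning overhead and pricing differences between instance types.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BurstyWorkload:
    """One repeating cycle of a bursty job: a burst of work, then idle time."""
    units: int           # GPUs or CPU cores held while the job runs
    active_hours: float  # hours of real work per cycle
    idle_hours: float    # hours per cycle with nothing to run

    @property
    def cycle_hours(self) -> float:
        return self.active_hours + self.idle_hours

    def always_on_unit_hours(self) -> float:
        # Static provisioning pays for the full cycle, busy or not.
        return self.units * self.cycle_hours

    def jit_unit_hours(self) -> float:
        # JIT provisioning pays only while the job is running
        # (provisioning overhead is ignored in this sketch).
        return self.units * self.active_hours

    def utilisation(self) -> float:
        return self.active_hours / self.cycle_hours


# The training example from the text: 32 GPUs for 4 hours, then idle for 2 days.
training = BurstyWorkload(units=32, active_hours=4, idle_hours=48)

print(f"always-on GPU-hours per cycle: {training.always_on_unit_hours():.0f}")
print(f"JIT GPU-hours per cycle:       {training.jit_unit_hours():.0f}")
print(f"utilisation when always-on:    {training.utilisation():.1%}")
```

For this profile, always-on provisioning consumes 1,664 GPU-hours per cycle against 128 for JIT, which means roughly 92% of the reserved capacity sits idle.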

According to a 2025 Gartner AI report, over 85% of ML projects fail to reach production — and of those that do, fewer than 40% sustain business value beyond 12 months. Infrastructure cost and operational complexity are consistently among the top cited reasons. Teams that cannot iterate cheaply cannot iterate at all.

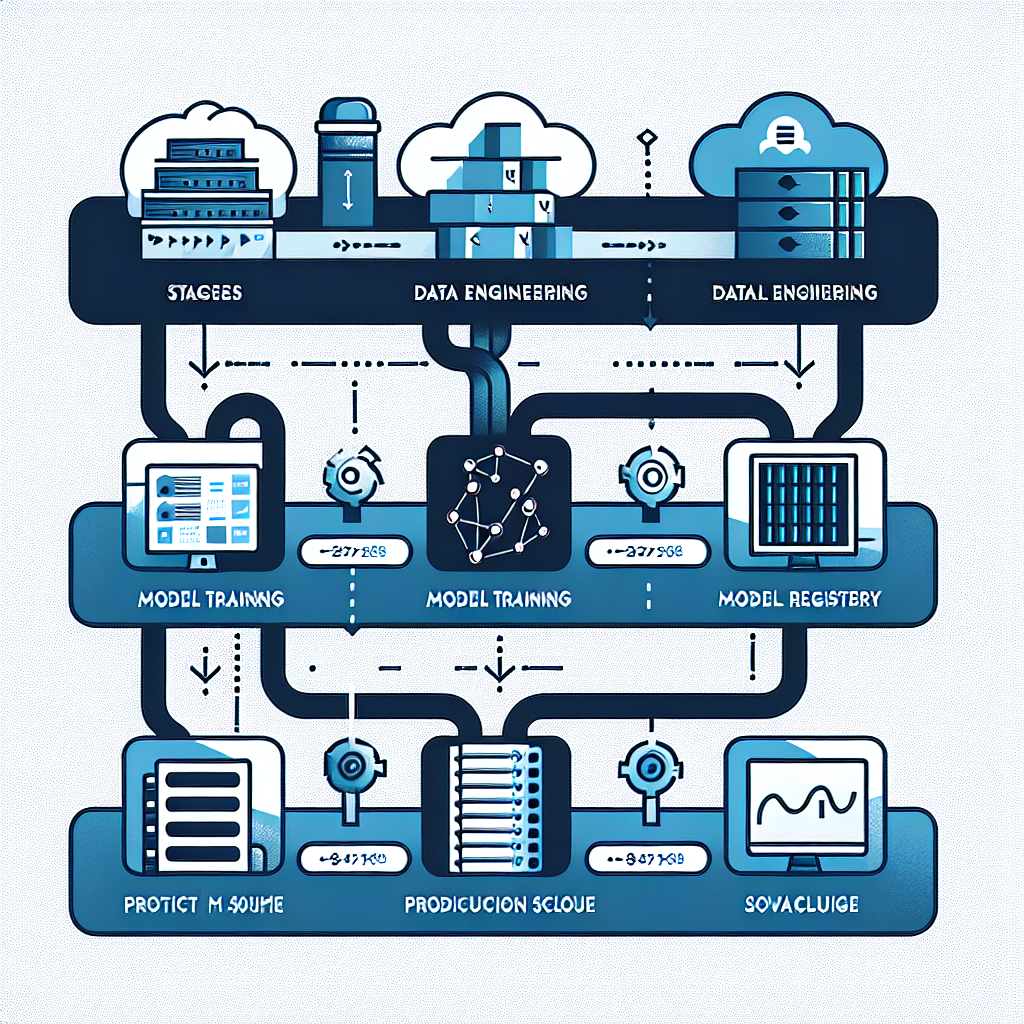

The three compute layers of an MLOps platform

Before designing for JIT compute, it helps to understand where compute is actually consumed in a production MLOps environment. There are three distinct layers, each with different scaling characteristics.

1. Training infrastructure

Model training is the most compute-intensive and most bursty layer. Jobs are triggered by events — new data arriving, a scheduled retraining cycle, or a data scientist launching an experiment. They run for minutes to hours, consuming significant GPU or CPU resources, then terminate completely. This is the highest-value target for JIT compute: provision a cluster at job start, tear it down at completion.

On Kubernetes-based platforms, this means using job schedulers like Kubeflow Pipelines or Argo Workflows with node auto-provisioning enabled. On AWS, SageMaker Training Jobs do this natively — compute spins up, the job runs, the instance terminates, and you are billed only for the runtime duration.
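
On a plain Kubernetes cluster, the same provision-run-release pattern can be approximated with a standard `batch/v1` Job. The sketch below builds such a manifest as a Python dictionary. The job name and image are hypothetical, and it assumes the cluster exposes GPUs through the NVIDIA device plugin (the `nvidia.com/gpu` resource) and has a node autoscaler that reacts to pending pods.

```python
import json


def training_job_manifest(name: str, image: str, gpus: int,
                          ttl_seconds: int = 300) -> dict:
    """Build a batch/v1 Job that runs once and is cleaned up after finishing."""
    return {
        "apiVersion": "batch/v1",
        "kind": "Job",
        "metadata": {"name": name, "labels": {"workload-type": "training"}},
        "spec": {
            # Delete the finished Job (and its pods) after this many seconds.
            "ttlSecondsAfterFinished": ttl_seconds,
            "backoffLimit": 2,  # retry a failed pod at most twice
            "template": {
                "spec": {
                    "restartPolicy": "Never",
                    "containers": [{
                        "name": "trainer",
                        "image": image,
                        # A pending pod with a GPU request is what prompts
                        # the autoscaler to add a GPU node.
                        "resources": {"limits": {"nvidia.com/gpu": gpus}},
                    }],
                }
            },
        },
    }


manifest = training_job_manifest(
    name="train-churn-model",                     # hypothetical job name
    image="registry.example.com/trainer:latest",  # hypothetical image
    gpus=8,
)
print(json.dumps(manifest, indent=2))
```

Because `kubectl apply -f` accepts JSON as well as YAML, the printed output can be applied directly. Once the pod finishes, the GPU node becomes unneeded and is eligible for scale-down.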

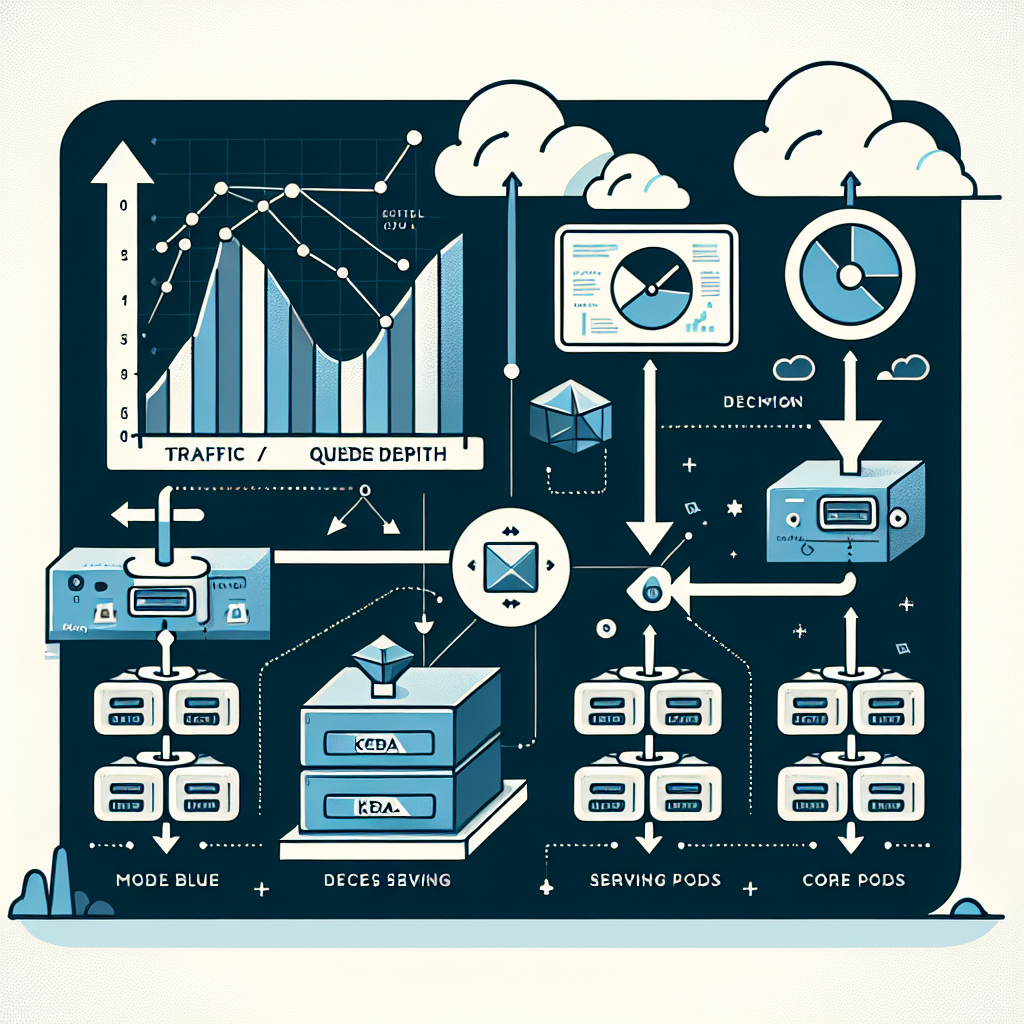

2. Serving infrastructure

Model serving has a different profile. Online inference endpoints require low latency and must be always available — but their load varies significantly across the day, week, and product cycle. A recommendation engine may see 10x traffic during peak hours compared to overnight. A fraud detection model may idle for hours between bursts of transaction activity.

JIT compute for serving means horizontal pod autoscaling (HPA) tied to request queue depth, combined with KEDA for event-driven scaling from message queues or custom metrics. The goal is to maintain a minimum viable serving fleet at baseline, then scale out rapidly when demand arrives and scale back in when it subsides — without manual intervention.
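
The scaling decision itself is simple. The Kubernetes documentation describes the HPA's core rule as `desiredReplicas = ceil(currentReplicas * currentMetricValue / desiredMetricValue)`, skipped when the ratio is within a tolerance (0.1 by default) of 1. The sketch below reimplements only that rule for a per-pod queue-depth metric. The function name and the example numbers are illustrative, and the real controller adds stabilisation windows and scaling policies that are omitted here.

```python
import math


def desired_replicas(current_replicas: int,
                     current_metric: float,
                     target_metric: float,
                     min_replicas: int,
                     max_replicas: int,
                     tolerance: float = 0.1) -> int:
    """Approximate the HPA core rule for an average-per-pod metric."""
    ratio = current_metric / target_metric
    if abs(ratio - 1.0) <= tolerance:
        # Close enough to target: do not scale, which avoids flapping.
        desired = current_replicas
    else:
        desired = math.ceil(current_replicas * ratio)
    # Clamp to the configured floor and ceiling of the serving fleet.
    return max(min_replicas, min(max_replicas, desired))


# Peak traffic: 4 pods each see 50 queued requests against a target of 20.
print(desired_replicas(4, current_metric=50, target_metric=20,
                       min_replicas=2, max_replicas=20))
# Overnight: queue depth falls to 2 per pod, so the fleet shrinks to its floor.
print(desired_replicas(4, current_metric=2, target_metric=20,
                       min_replicas=2, max_replicas=20))
```

The `min_replicas` floor is what keeps the "minimum viable serving fleet" in place when demand drops.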

3. Pipeline and batch infrastructure

Feature engineering, data validation, batch inference, and model evaluation pipelines are scheduled or event-triggered workloads. They do not require always-on infrastructure — they require reliable, fast provisioning on demand. This layer maps cleanly to serverless compute (AWS Lambda for lightweight transforms, Fargate for containerised batch jobs) or Kubernetes CronJobs with cluster autoscaler enabled.
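
For the Kubernetes option, a scheduled batch workload reduces to a `batch/v1` CronJob whose pods request the CPU they need and nothing more. The sketch below is a minimal illustration: the job name, image, and core count are hypothetical, and it assumes a cluster autoscaler is present to add and remove nodes around each run.

```python
import json


def nightly_batch_cronjob(name: str, image: str, cpu_cores: int,
                          schedule: str = "0 0 * * *") -> dict:
    """Build a batch/v1 CronJob; the default schedule is midnight every day."""
    return {
        "apiVersion": "batch/v1",
        "kind": "CronJob",
        "metadata": {"name": name},
        "spec": {
            "schedule": schedule,
            # Skip a run if the previous one is still in progress.
            "concurrencyPolicy": "Forbid",
            "jobTemplate": {
                "spec": {
                    "template": {
                        "spec": {
                            "restartPolicy": "OnFailure",
                            "containers": [{
                                "name": "batch",
                                "image": image,
                                # The CPU request drives node provisioning.
                                "resources": {
                                    "requests": {"cpu": str(cpu_cores)}
                                },
                            }],
                        }
                    }
                }
            },
        },
    }


cronjob = nightly_batch_cronjob(
    name="nightly-batch-inference",                    # hypothetical name
    image="registry.example.com/batch-scorer:latest",  # hypothetical image
    cpu_cores=8,
)
print(json.dumps(cronjob, indent=2))
```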

What just-in-time compute looks like in practice

The architecture for a JIT-capable MLOps platform is not dramatically different from a standard Kubernetes-based setup. The difference is in the configuration choices made at each layer.

Three configuration decisions matter most. First, enable the Kubernetes Cluster Autoscaler with aggressive scale-down settings. The default 10-minute idle window before node termination (the scale-down-unneeded-time flag) is too conservative for ML workloads; in most environments, a 3–5 minute window is safe and meaningfully reduces cost. Second, use spot or preemptible instances for training jobs wherever possible. AWS advertises savings of up to 90% over on-demand pricing for SageMaker Managed Spot Training, and up to 64% through SageMaker Savings Plans. Training jobs that checkpoint regularly can resume after an interruption, which makes them ideal candidates for preemptible compute. Third, instrument every workload with cost tags from day one. Without per-model, per-team cost visibility, optimisation is guesswork.
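
The restartability that makes training jobs suitable for preemptible instances usually comes from checkpointing. The sketch below shows the pattern in miniature, with a counter standing in for real training state. All names are hypothetical, the `interrupt_at` parameter only simulates a spot reclaim, and a real job would write framework-specific checkpoints to durable storage rather than a local JSON file.

```python
import json
import os
import tempfile
from pathlib import Path
from typing import Optional, Tuple


def load_step(path: Path) -> int:
    """Return the last checkpointed step, or 0 if no checkpoint exists."""
    if path.exists():
        return json.loads(path.read_text())["step"]
    return 0


def save_step(path: Path, step: int) -> None:
    """Write the checkpoint atomically so a reclaim cannot corrupt it."""
    tmp = path.with_name(path.name + ".tmp")
    tmp.write_text(json.dumps({"step": step}))
    os.replace(tmp, path)


def train(total_steps: int, checkpoint: Path, checkpoint_every: int = 10,
          interrupt_at: Optional[int] = None) -> Tuple[int, int]:
    """Resume from the checkpoint; return (step reached, steps run this time)."""
    step = start = load_step(checkpoint)
    while step < total_steps:
        if interrupt_at is not None and step >= interrupt_at:
            break  # simulated spot interruption
        step += 1  # stands in for one unit of training work
        if step % checkpoint_every == 0 or step == total_steps:
            save_step(checkpoint, step)
    return step, step - start


with tempfile.TemporaryDirectory() as workdir:
    ckpt = Path(workdir) / "checkpoint.json"
    print(train(100, ckpt, interrupt_at=25))  # reclaimed at step 25
    print(train(100, ckpt))                   # resumes from step 20
```

In this run the interruption costs only the five steps completed since the last checkpoint, which is the trade-off to tune: more frequent checkpoints mean less repeated work but more I/O.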

LLM workloads change the equation

The emergence of large language model workloads in production has added new complexity to the JIT compute problem. LLM inference has fundamentally different compute characteristics — GPU vs. CPU decisions, memory-intensive key-value caches, and highly variable latency that depends on both input and output length. These characteristics make naive autoscaling ineffective.

For LLM serving specifically, JIT compute requires a different approach. Rather than scaling on CPU or memory metrics, you scale on token throughput and queue depth. Tools like vLLM and TGI (Text Generation Inference) expose these metrics natively. KEDA can consume them as custom metrics to drive horizontal scaling decisions that are actually correlated with model load — not just system resource utilisation.
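
One way to wire this together is a KEDA ScaledObject with a Prometheus trigger. The sketch below builds the manifest as a Python dictionary. It assumes vLLM metrics are already scraped into Prometheus and that the waiting-queue gauge is named `vllm:num_requests_waiting`, which should be checked against the deployed vLLM version. The deployment name, Prometheus address, and threshold are hypothetical.

```python
import json


def llm_queue_scaled_object(deployment: str, prometheus_url: str,
                            waiting_per_replica: int,
                            min_replicas: int, max_replicas: int) -> dict:
    """Build a KEDA ScaledObject that scales on queued LLM requests."""
    return {
        "apiVersion": "keda.sh/v1alpha1",
        "kind": "ScaledObject",
        "metadata": {"name": f"{deployment}-queue-scaler"},
        "spec": {
            "scaleTargetRef": {"name": deployment},
            # Floor of warm replicas kept even when the queue is empty.
            "minReplicaCount": min_replicas,
            "maxReplicaCount": max_replicas,
            "triggers": [{
                "type": "prometheus",
                "metadata": {
                    "serverAddress": prometheus_url,
                    # Assumed metric name; verify against your vLLM version.
                    "query": "sum(vllm:num_requests_waiting)",
                    # KEDA expects trigger metadata values as strings.
                    "threshold": str(waiting_per_replica),
                },
            }],
        },
    }


scaled_object = llm_queue_scaled_object(
    deployment="llm-server",                               # hypothetical
    prometheus_url="http://prometheus.monitoring:9090",    # hypothetical
    waiting_per_replica=8,
    min_replicas=1,
    max_replicas=6,
)
print(json.dumps(scaled_object, indent=2))
```

Scaling on queued requests ties replica count to work the model has not yet started, which GPU utilisation alone does not reveal.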

The other LLM-specific consideration is cold start latency. GPU nodes typically take 3–5 minutes to provision and warm up, and loading large model weights onto the GPU adds further delay before the first request can be served. For latency-sensitive serving, this means maintaining a minimum fleet of warm GPU nodes even during low-traffic periods. This is a deliberate and justified exception to pure JIT provisioning, and one that must be factored into cost models.
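
A rough way to factor the warm floor into a cost model is to price it alongside the backlog that would build up without it. The sketch below is a back-of-envelope model only: the function names are hypothetical, and the hourly price, request rates, and per-replica throughput are made-up inputs rather than measurements.

```python
import math

HOURS_PER_MONTH = 730  # average number of hours in a month


def warm_floor_monthly_cost(warm_nodes: int, hourly_price: float) -> float:
    """Cost of keeping a fixed number of GPU nodes running all month."""
    return warm_nodes * hourly_price * HOURS_PER_MONTH


def cold_start_backlog(arrival_rps: float, warm_replicas: int,
                       rps_per_replica: float,
                       cold_start_seconds: float) -> float:
    """Requests that queue up while extra capacity is still provisioning."""
    shortfall = max(0.0, arrival_rps - warm_replicas * rps_per_replica)
    return shortfall * cold_start_seconds


def min_warm_replicas(arrival_rps: float, rps_per_replica: float) -> int:
    """Smallest warm fleet that absorbs a given arrival rate with no backlog."""
    return math.ceil(arrival_rps / rps_per_replica)


# Hypothetical inputs: 2 warm nodes at 2.50 per hour, a burst of 12 requests
# per second, 4 requests per second per replica, and a 5-minute cold start.
print(warm_floor_monthly_cost(warm_nodes=2, hourly_price=2.5))
print(cold_start_backlog(arrival_rps=12, warm_replicas=2,
                         rps_per_replica=4, cold_start_seconds=300))
print(min_warm_replicas(arrival_rps=12, rps_per_replica=4))
```

Comparing the monthly cost of one additional warm node with the backlog it prevents turns the size of the warm floor into an explicit trade-off rather than a guess.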

The business case for getting this right

The financial argument for JIT compute is straightforward, but the operational argument is equally compelling. In 2026, AI budgets have tripled in regulated industries including finance, healthcare, and manufacturing — but scrutiny of ROI has tripled alongside them. Infrastructure that cannot demonstrate cost efficiency will not survive budget reviews.

Beyond cost, JIT compute directly enables faster experimentation. When data scientists know that a 16-GPU training cluster cancelled after 20 minutes is billed for only those 20 minutes, they experiment more aggressively. When a new model variant can be deployed to a serving endpoint in minutes without a capacity request, the feedback loop between experimentation and production shortens dramatically. This velocity compounds: teams that can iterate faster build better models faster, and the business value gap between them and slower competitors widens with every sprint.

Where to start

If you are building or refactoring an MLOps platform today, the highest-leverage first step is a compute audit. Map every workload in your ML environment to one of three categories: always-on (justified), scheduled (convertible to JIT), or event-driven (already JIT or should be). In most organisations I have worked with, 60–70% of ML compute falls into the second category — scheduled workloads running on always-on infrastructure that could be converted with relatively low engineering effort.
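
The audit can start as a small script over a workload inventory. The sketch below classifies workloads by how they are triggered and reports each category's share of compute hours. The classification rule, field names, and inventory entries are all hypothetical; a real audit would draw on billing exports and scheduler metadata.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, Iterable

ALWAYS_ON = "always-on (justified)"
SCHEDULED = "scheduled (convertible to JIT)"
EVENT_DRIVEN = "event-driven (already JIT or should be)"


@dataclass(frozen=True)
class Workload:
    name: str
    trigger: str                  # "continuous", "schedule", or "event"
    monthly_compute_hours: float  # hours of provisioned compute per month


def classify(workload: Workload) -> str:
    """Map a workload to one of the three audit categories."""
    categories = {"continuous": ALWAYS_ON, "schedule": SCHEDULED,
                  "event": EVENT_DRIVEN}
    if workload.trigger not in categories:
        raise ValueError(f"unknown trigger: {workload.trigger!r}")
    return categories[workload.trigger]


def compute_share_by_category(workloads: Iterable[Workload]) -> Dict[str, float]:
    """Return each category's fraction of total provisioned compute hours."""
    totals: Dict[str, float] = defaultdict(float)
    for w in workloads:
        totals[classify(w)] += w.monthly_compute_hours
    grand_total = sum(totals.values())
    if grand_total == 0:
        return {}
    return {cat: hours / grand_total for cat, hours in totals.items()}


# Hypothetical inventory for illustration.
inventory = [
    Workload("online-recs-endpoint", "continuous", 720),
    Workload("nightly-batch-scoring", "schedule", 1400),
    Workload("weekly-retrain", "schedule", 400),
    Workload("feature-refresh", "event", 480),
]

for category, share in compute_share_by_category(inventory).items():
    print(f"{category}: {share:.0%}")
```

In practice the "continuous" bucket deserves the most scrutiny, since each workload placed there should carry an explicit justification for staying always-on.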

From there, prioritise training infrastructure first. The savings are largest, the risk of disruption is lowest (training jobs are restartable), and the engineering effort is manageable. Serving infrastructure comes second, and pipeline infrastructure — often the easiest — can run in parallel.

The goal is not to eliminate all static provisioning. Some workloads genuinely require always-on infrastructure. The goal is to make that a deliberate, justified architectural decision — not the default.